A-IQ Ready

Quantum Sensing and Artificial Intelligence

The onset of climate change, widespread geopolitical conflicts, and social inequalities make it clear that innovation and change are necessary to create a better world.

Through the A-IQ Ready project (Artificial Intelligence Using Quantum Measured Information for Realtime Distributed Systems at the Edge), 50 project partners from 15 countries (including Offenburg University of Applied Sciences) have joined forces to tackle the problems of our time and the future on a broad front. Equipped with cutting-edge technologies such as quantum sensing and artificial intelligence, the project partners are working across eight supply chains on novel methods to overcome the challenges of the future.

As part of the "Propulsion Health and Availability in Safety-Critical Situations" supply chain, Offenburg University is researching neural networks and machine learning methods to make the electric mobility of the future safer. Novel quantum sensor technology is expected to reveal aspects of the physical behavior of heavily loaded electric motors that were previously hidden from researchers and engineers. The aim is a better understanding of how a motor behaves when faults occur, and a basis for assessing its State of Health (SOH) and the probability of an imminent failure. Because the exact relationships in the data are difficult to trace, neural networks at Offenburg University are being trained to learn the motor's behavior from this quantum sensor data. The result is a digital model of the motor that infers internal states from easily measurable parameters. In production-ready motors, which do not carry expensive quantum sensors, those states would otherwise be inaccessible.

Neural networks and machine learning are on everyone's lips—and are undeniably state-of-the-art. What exactly is left to explore?

This question gains significance when we take the next step: How large does a neural network actually need to be to meaningfully learn the available information (so-called training data) and utilize it effectively? How exactly do we even feed the training data to the network? Is it best to provide all the data at once, or rather step by step in small chunks?

And even if good instinct or a bit of luck has produced a precise neural network, the questions do not end there: Are there other configurations that achieve even better results with the same data set? Or ones that are similarly precise but need much less training time?

Offenburg University explored precisely these questions as part of the A-IQ Ready project. In a case study, various configuration options (so-called hyperparameters) were selected and examined for their influence on the quality of the fully trained networks and on the duration of training. Interesting patterns emerged: even small differences in the choice of hyperparameters can decide between success and failure.

A selected example of this is shown in Fig. 1: The figure displays the responses of two fully trained neural networks (yellow graph for Network 1, blue graph for Network 2, Fig. 1 top) to the same input sequence (three graphs, Fig. 1 bottom). The closer the networks’ output matches the reference result determined by measurement (orange graph, Fig. 1 top), the more precise they are. Both networks have the same architecture and were trained with identical data—only the subdivision of this training data into the aforementioned data chunks differs.

The impact, however, is significant: While the output sequence of Network 1 has absolutely nothing in common with the reference result, Network 2 performs almost identically to the reference. In the context of electric motors, this means: Network 2 mimics the operational behavior of a motor very well and can accordingly be used as a digital model, whereas Network 1 is completely off the mark and therefore unsuitable.
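One common way to put a number on how closely an output sequence matches the measured reference is the root-mean-square error (RMSE). The article does not state which precision measure was used, so the choice of metric, the function name `rmse`, and the toy sequences below are assumptions for illustration only.

```python
import math


def rmse(prediction, reference):
    """Root-mean-square error between a network output and the reference."""
    if len(prediction) != len(reference):
        raise ValueError("sequences must have the same length")
    squared_errors = [(p - r) ** 2 for p, r in zip(prediction, reference)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Made-up toy sequences, NOT measured data from the study.
reference = [0.0, 1.0, 2.0, 3.0]
network_1 = [3.0, 0.0, 3.0, 0.0]   # output unrelated to the reference
network_2 = [0.1, 0.9, 2.1, 2.9]   # output close to the reference

print("Network 1 RMSE:", rmse(network_1, reference))
print("Network 2 RMSE:", rmse(network_2, reference))
```

A lower value means a closer match, so in this toy case the second sequence would be rated the more precise one.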

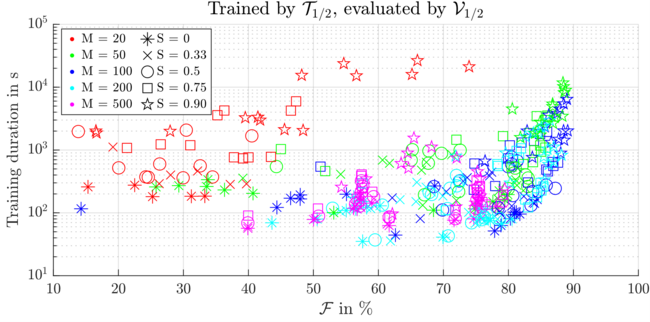

Figure 2: Precision of various neural networks trained using different hyperparameter configurations, plotted against training duration.

Each marker represents a trained and evaluated neural network: The color coding indicates the different batch sizes, and the shape describes the degree of overlap.

An interesting finding from the research is that, for the chosen architecture (neural state-space models), network size is of secondary importance: even a small number of interconnected artificial neurons is enough to build high-performing networks. Below a narrow threshold, networks are too small to be suitable; once that threshold is crossed, even multiplying the number of neurons yields only marginal improvements.
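To give a rough idea of what such a model looks like, the following minimal sketch rolls a tiny neural state-space model forward over an input sequence. It assumes a generic form in which the hidden state is updated by a single tanh layer from the previous state and the current input, and the output is read out linearly from the state. The layer sizes, the random weights, and all function names are hypothetical and are not taken from the study.

```python
import math
import random


def make_layer(n_in, n_out, rng):
    """Random weights and zero biases for one dense layer (illustrative init)."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def dense(layer, inputs, activation=None):
    """Apply one dense layer: activation(W @ inputs + b)."""
    weights, biases = layer
    out = [sum(w * v for w, v in zip(row, inputs)) + b
           for row, b in zip(weights, biases)]
    return [activation(o) for o in out] if activation else out


def simulate(state_layer, output_layer, x0, inputs):
    """Roll the model forward over an input sequence.

    y[k]   = W_y x[k] + b_y
    x[k+1] = tanh(W_x [x[k], u[k]] + b_x)
    """
    x, outputs = list(x0), []
    for u in inputs:
        outputs.append(dense(output_layer, x))
        x = dense(state_layer, x + list(u), activation=math.tanh)
    return outputs


rng = random.Random(0)
n_states, n_inputs, n_outputs = 4, 3, 1  # illustrative sizes, not the study's values
state_layer = make_layer(n_states + n_inputs, n_states, rng)
output_layer = make_layer(n_states, n_outputs, rng)

# Synthetic input sequence standing in for easily measurable motor signals.
input_sequence = [[math.sin(0.1 * k), math.cos(0.1 * k), 1.0] for k in range(50)]
y = simulate(state_layer, output_layer, [0.0] * n_states, input_sequence)
print(len(y), len(y[0]))
```

In this sketch, `n_states` is the number that determines how "large" the network is; training (omitted here) would adjust the weights so that `y` follows the measured reference.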

The investigations also showed that the aforementioned “data chunks” (so-called batches) are of particular significance. Training on all the data at once turned out to be a very bad idea. Too many small batches, on the other hand, slow down training considerably (red markers, Fig. 2) and also lead to less precise results. A sweet spot was identified for batches with a length of 50–100 data points (turquoise and blue markers, Fig. 2). In this context, another property was investigated: what happens when batches are allowed to overlap slightly, that is, to share data points?

Here, the research yielded a very clear result: The more the batches overlap, the better the neural networks trained on them perform! A drawback, however, is that overlap also increases the training duration, because shared data points are processed more than once. Users therefore have settings they can adjust according to their time constraints and quality requirements.
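The trade-off between overlap and training duration can be illustrated with a minimal sketch that cuts a measured sequence into fixed-length batches. The function name `split_into_batches`, the stride rule, and the 1000-point stand-in sequence are assumptions for illustration, not the procedure used in the study.

```python
def split_into_batches(sequence, batch_length, overlap=0):
    """Cut a time series into consecutive batches that share `overlap` points."""
    if batch_length <= 0:
        raise ValueError("batch_length must be positive")
    if not 0 <= overlap < batch_length:
        raise ValueError("overlap must satisfy 0 <= overlap < batch_length")
    stride = batch_length - overlap  # how far each new batch is shifted
    last_start = len(sequence) - batch_length
    # Incomplete trailing batches are dropped so all batches have equal length.
    return [sequence[s:s + batch_length]
            for s in range(0, last_start + 1, stride)]


samples = list(range(1000))  # stand-in for 1000 measured data points
no_overlap = split_into_batches(samples, batch_length=100, overlap=0)
half_overlap = split_into_batches(samples, batch_length=100, overlap=50)

print(len(no_overlap), len(half_overlap))  # prints "10 19"
```

With a batch length of 100, a 50-point overlap yields 19 batches instead of 10 from the same 1000 data points, so nearly twice as many data points are processed in each pass over the data.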

The detailed results were first presented in 2025 at the International Electric Machines and Drives Conference (IEMDC) in Houston, Texas, and published as a paper by IEEE: https://doi.org/10.1109/IEMDC60492.2025.11061168

Funding Program

A-IQ Ready is funded under the Key Digital Technologies Joint Undertaking (KDT JU), the public-private partnership for research, development, and innovation within Horizon Europe, and by national authorities under Grant Agreement No. 101096658.

Project partners

50 project partners from 15 European countries

Project duration

February 2023 to March 2026